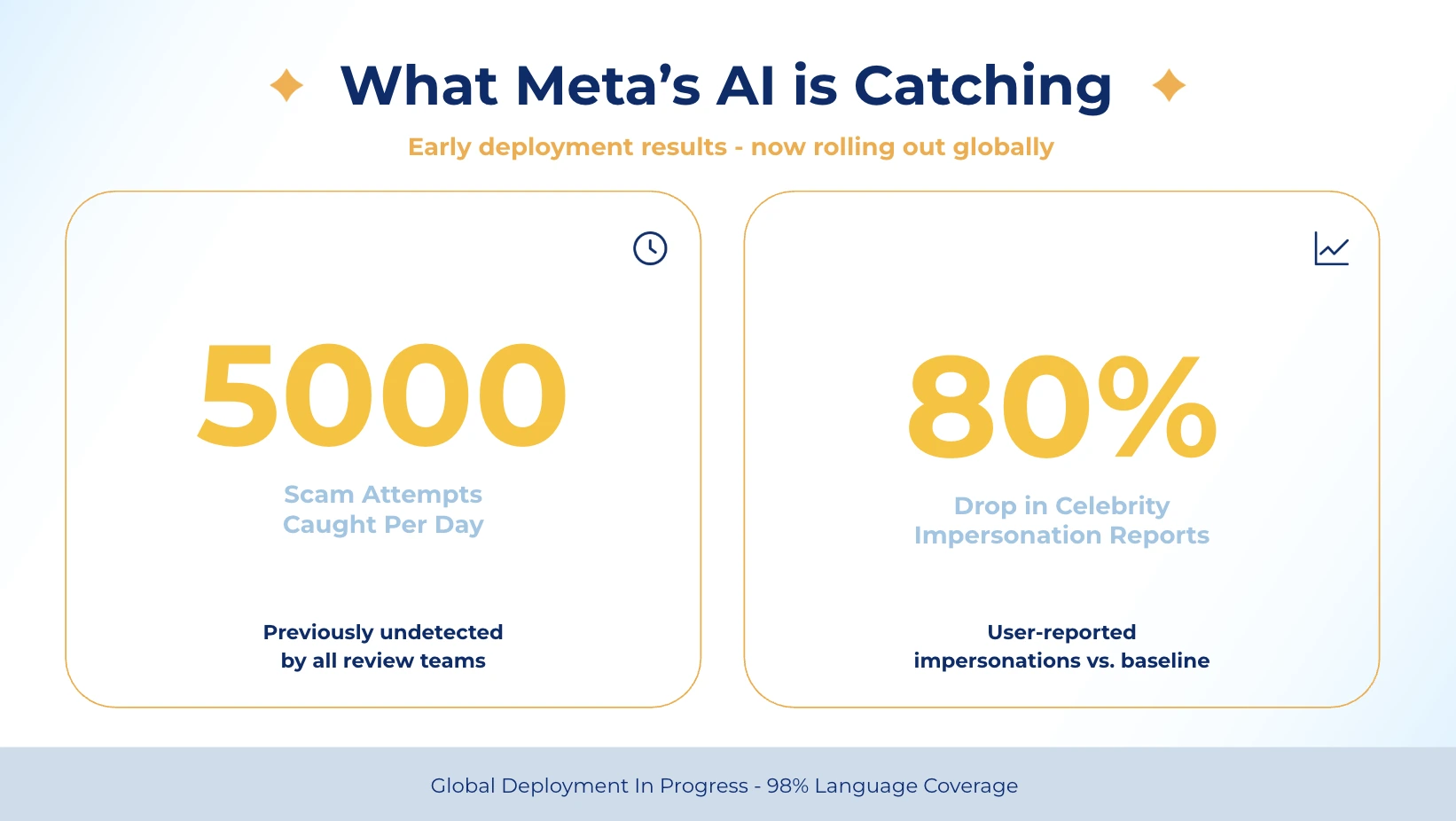

Meta just announced a significant overhaul to how it polices content across Facebook and Instagram, and if you’re running paid campaigns, this isn’t a press release you skim and forget. The platform is deploying more advanced AI systems built to catch violations that human review teams consistently miss: scams, impersonation, account takeovers, and fraudulent ads. In early tests, these systems are already flagging 5,000 scam attempts per day that no existing review team had caught before.

For performance marketers, this is a dual signal. The cleanup of bad actors and low-quality ad inventory is broadly good for ad ecosystem health and brand safety. But it also means tighter enforcement infrastructure, and a higher chance that legitimate campaigns encounter friction if creative or targeting patterns resemble violation profiles. Understanding what Meta’s AI can now detect, and what it means for your ad account health, is no longer optional background reading. This article breaks down exactly what’s changing, what the data says, and how to position your campaigns for a stricter enforcement environment.

What Meta’s New AI Actually Does Differently

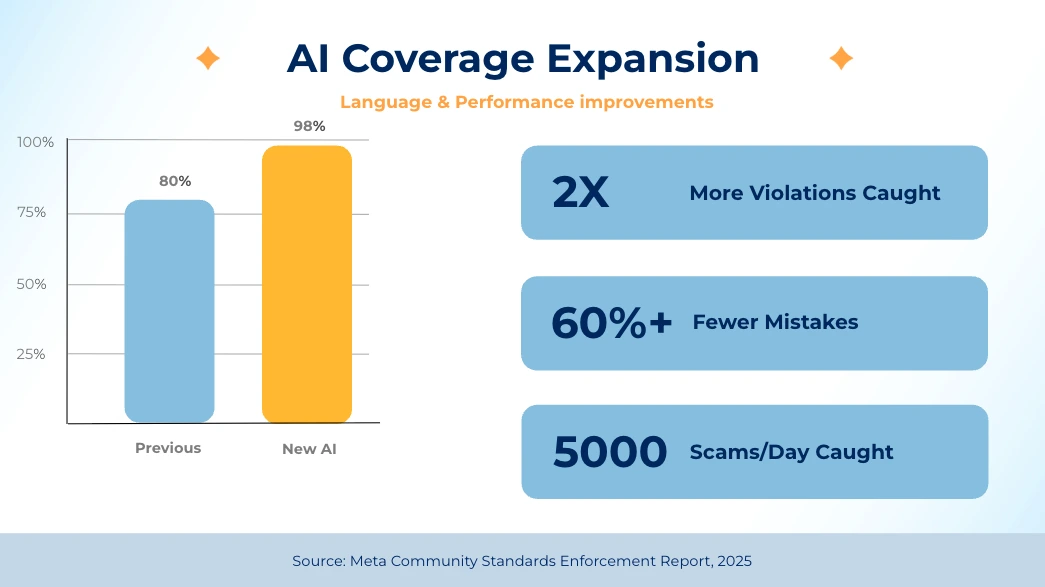

Meta’s AI content enforcement has been limited for years by one blunt constraint: language. Human review teams and legacy AI systems covered roughly 80 languages, leaving massive blind spots across Southeast Asia, the Middle East, Africa, and Latin America. The new generation of AI systems covers languages spoken by 98% of people online. That gap closure is not incremental. It restructures which markets can be effectively policed and which violation types can be caught at scale.

The architecture shift goes beyond language. Earlier moderation systems largely reviewed individual signals in isolation: a changed password, a new login location, an edited profile. These signals look routine when reviewed one by one. The new AI analyses clusters of behavioural signals in context, which allows it to identify coordinated patterns that no single trigger would surface. Meta’s example: detecting an account takeover by combining a new access location, a password change, and a profile edit. Individually these signals appear harmless, but together they form a threat pattern the AI recognises with high confidence.
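To make the difference concrete, here is a minimal sketch of why scoring signals together surfaces a pattern that per-signal checks miss. It is an illustration only: the signal names, weights, time window, and threshold are hypothetical and are not drawn from Meta’s systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical weights: each signal alone stays below the alert threshold.
SIGNAL_WEIGHTS = {
    "new_login_location": 0.3,
    "password_change": 0.3,
    "profile_edit": 0.2,
}
ALERT_THRESHOLD = 0.7        # assumed cut-off, for illustration only
WINDOW = timedelta(hours=1)  # assumed window in which signals count as related


@dataclass(frozen=True)
class Event:
    account_id: str
    signal: str
    at: datetime


def isolated_alerts(events):
    """Per-signal check: judge every event on its own weight."""
    return [e for e in events if SIGNAL_WEIGHTS.get(e.signal, 0.0) >= ALERT_THRESHOLD]


def contextual_score(events, account_id, now):
    """Sum the weights of distinct signals seen for one account inside the window."""
    recent = {
        e.signal
        for e in events
        if e.account_id == account_id and timedelta(0) <= now - e.at <= WINDOW
    }
    return sum(SIGNAL_WEIGHTS.get(s, 0.0) for s in recent)


def is_suspicious(events, account_id, now):
    """Contextual check: alert when the combined score crosses the threshold."""
    return contextual_score(events, account_id, now) >= ALERT_THRESHOLD
```

With these assumed weights, a new login location, a password change, and a profile edit on the same account within an hour raise no alert individually, yet the combined score crosses the threshold.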

This contextual reasoning capability is what allows the system to outperform human teams in specific categories. On adult sexual solicitation content, the AI catches twice as many violations as review teams, while cutting its own error rate by more than 60%. That ratio — more catches, fewer mistakes — is the core promise of the upgrade. Legacy AI enforcement traded recall for precision. The new systems are claiming both.

The core takeaway: Meta’s AI can now see what human moderators couldn’t — across more markets, more languages, and more behavioural contexts than ever before.

The Scam and Impersonation Crackdown — And Why It Matters for Your Ad Account

The numbers Meta published are specific enough to demand attention. The new AI is identifying and mitigating 5,000 scam attempts per day that previously slipped through all existing review. On impersonation, user reports of the most-impersonated celebrities dropped by more than 80% after the system launched. These aren’t aspirational figures; they’re early test results from systems Meta is now beginning to deploy.

For performance marketers, this matters in two distinct ways. First, the positive: cleaner inventory. Scammers and impersonators occupy ad placements, Audience Network inventory, and organic reach that competes with your legitimate campaigns. As these accounts are removed at higher rates, ad auction quality improves and the trust signal of the platform strengthens. Audiences that have been burned by scam ads become more sceptical of everything — including legitimate offers. Meta reducing scam prevalence is a rising tide that benefits compliant advertisers.

Second, the risk: enforcement proximity. The same AI systems making judgements about scam patterns — unusually low prices, suspicious domain structures, mismatched brand signals — are also evaluating your ads. Meta explicitly named an example: a fake sporting goods site detected by the combination of a real logo used with unusually low prices and a suspicious web address. If your campaign uses aggressive discount messaging, third-party brand elements, or redirects through unfamiliar domains, the detection logic that catches fraudsters may also flag your creative for review.

The mitigation is straightforward but requires discipline: keep your ad domain, landing page URL, creative, and brand identity entirely consistent. Discount messaging should be anchored to a percentage or specific price — not open-ended urgency. And any use of third-party brand marks should be legally cleared and visually secondary to your own brand.
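The domain part of that discipline can be checked mechanically before launch. The sketch below assumes you can list each ad’s display URL and final landing URL yourself; the function names are illustrative, and the comparison deliberately uses a naive "last two labels" rule that gives wrong answers for multi-part suffixes such as .co.uk.

```python
from urllib.parse import urlparse


def hostname(url):
    """Return the lower-cased hostname, tolerating URLs without a scheme."""
    parsed = urlparse(url if "://" in url else "https://" + url)
    return (parsed.hostname or "").lower()


def base_domain(url):
    """Naive registrable domain: the last two labels of the hostname.

    Assumption for illustration only; multi-part suffixes such as .co.uk
    need a public-suffix list instead.
    """
    labels = hostname(url).split(".")
    return ".".join(labels[-2:])


def domains_consistent(display_url, landing_url):
    """True when the ad's display URL and landing page share a base domain."""
    a, b = base_domain(display_url), base_domain(landing_url)
    return bool(a) and a == b
```

Running `domains_consistent` over every ad in a campaign export before launch gives a quick list of creatives whose landing page sits on a different domain from the one shown in the ad.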

The takeaway: the AI that catches scammers applies the same signals when it evaluates your ads. Consistency between your domain, creative, and offer is now a brand safety requirement, not just good practice.

The Ad Fraud Reduction Signal You Should Be Tracking

Buried in the announcement is a figure that should be on every media buyer’s radar: after the new AI was tested against fake and fraudulent ads, views of ads containing scams and other serious violations dropped by 7%. That is a measurable reduction in fraudulent ad impressions — which translates directly into a change in auction dynamics.

A non-trivial share of digital ad spend currently competes against fraudulent inventory. Scam advertisers don’t follow bid discipline; they spend aggressively because their economics don’t require real ROI. Removing them from the auction reduces artificial bid inflation in affected verticals. For legitimate advertisers in financial services, e-commerce, software, and travel, the medium-term effect is lower CPMs and more qualified inventory.

This is not an immediate shift. Meta is “beginning to deploy” these systems, which implies a rollout over months. But the direction is clear: the platform is investing structurally in ad quality at the inventory level. For campaign planning, this is the right time to increase investment in Meta placements previously avoided due to brand safety concerns — particularly Audience Network and Reels, where scam adjacency has been a common objection.

How to track whether this is working for your account: monitor your brand safety reporting inside Ads Manager under Delivery Insights → Audience. Watch for changes in delivery composition across placement types. If Audience Network CPMs begin rising while performance holds, that’s a signal that inventory quality is improving.
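If you export placement-level delivery data, the CPM comparison takes only a few lines. The sketch below assumes rows with `placement`, `period`, `spend`, and `impressions` fields; those field names are made up for illustration and do not reflect a specific Ads Manager export format. CPM is computed as spend divided by impressions, multiplied by 1,000.

```python
from collections import defaultdict


def cpm_by_placement(rows):
    """Aggregate spend and impressions, then return CPM per (placement, period)."""
    totals = defaultdict(lambda: [0.0, 0])
    for row in rows:
        key = (row["placement"], row["period"])
        totals[key][0] += float(row["spend"])
        totals[key][1] += int(row["impressions"])
    return {
        key: (spend / impressions) * 1000
        for key, (spend, impressions) in totals.items()
        if impressions > 0  # skip placements that never delivered
    }


def cpm_change(rows, placement, before, after):
    """Relative CPM change between two periods, e.g. 0.10 means +10%."""
    cpms = cpm_by_placement(rows)
    old, new = cpms[(placement, before)], cpms[(placement, after)]
    return (new - old) / old
```

Comparing `cpm_change` for each placement against your own performance metric for the same periods shows whether a CPM movement came with a change in results.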

The 7% reduction in fraudulent ad views is an early signal of structural inventory cleanup — monitor your placement mix and CPM trends in previously-avoided placements over the next quarter.

Enforcement Framework & How to Keep Your Campaigns Compliant

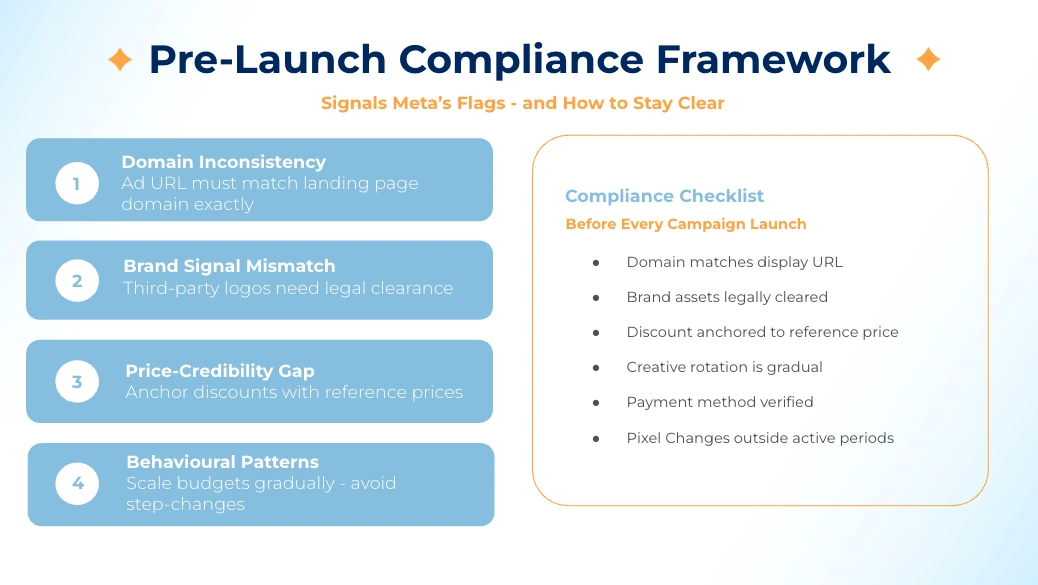

Understanding the enforcement logic lets you build a simple pre-launch compliance framework. Meta’s AI is not reading your campaign brief; it’s pattern-matching against known violation clusters. The patterns that trigger review overlap with tactics that many aggressive performance campaigns legitimately use. The fix is not to eliminate urgency or competitive messaging. It’s to remove the signals your ad shares with scam content.

The four risk signals Meta’s AI is trained on:

- Domain inconsistency — Your ad domain doesn’t match your landing page domain, or uses a redirect chain. Use a clean, direct domain match.

- Brand signal mismatch — Recognised brand logos or names used in ways inconsistent with the brand’s own advertising patterns. This is a particular risk for affiliate or comparison campaigns that include competitor branding.

- Price-to-credibility gap — Offers priced significantly below market rate without a clear credibility signal. Aggressive discount campaigns should be anchored with social proof above the fold.

- Behavioural account patterns — Sudden budget spikes, new payment methods, and rapid creative rotation overlap with what scam accounts do when scaling aggressively. Scale in increments rather than step-changes.
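For the last item, scaling in increments can be planned ahead of time. The following sketch builds a budget schedule in which no step raises the budget by more than a fixed fraction of the previous value. The 20% default is an arbitrary illustrative value, not a threshold published by Meta, and the function name is hypothetical.

```python
def budget_ramp(current, target, max_step=0.20):
    """Return successive budgets from `current` up to `target`.

    Each step raises the budget by at most `max_step` (a fraction of the
    previous value). The default is an assumption for illustration.
    """
    current, target = round(current, 2), round(target, 2)
    if current <= 0 or target < current or max_step <= 0:
        raise ValueError("need 0 < current <= target and max_step > 0")
    schedule = [current]
    while schedule[-1] < target:
        # Always advance by at least one cent so the loop terminates.
        step = max(schedule[-1] * max_step, 0.01)
        schedule.append(min(round(schedule[-1] + step, 2), target))
    return schedule
```

Moving from a budget of 100 to 200 with the default setting takes four increases rather than one doubling. How long to hold each step before the next is a judgement call this sketch leaves open.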

The compliance framework is not about being conservative — it’s about separating your signal from the noise that triggers review, so your campaigns launch and run without interruption.

The Measurement Transparency Play

One underreported element of this announcement: Meta is also using AI to improve how it measures the prevalence of violating content. They acknowledge that changes in measurement methodology — not actual changes in violation rates — caused the apparent increase in Instagram adult nudity and sexual activity prevalence. The old number wasn’t the truth; the new number is a better estimate of existing reality.

This matters because future Community Standards Enforcement Reports (CSER) will reflect more accurate baselines, not necessarily worse outcomes. If you use these reports for brand safety decision-making, the transition quarter will produce figures that look noisier than they are. Apply a longer trend window of at least two quarters before drawing conclusions from changes in prevalence metrics. Meta confirmed that its use of AI tools for measurement will be reflected in its next report, so the next CSER should be treated as a new baseline, not a continuation of the old series.

Key Takeaways

- Language coverage: Meta’s AI now covers languages spoken by 98% of people online, up from roughly 80 languages. Enforcement blind spots in non-English markets are closing fast.

- Scam detection: 5,000 previously-undetected scam attempts are flagged daily. This improves inventory quality but applies the same logic to your ads.

- Impersonation crackdown: User reports of the most-impersonated celebrities dropped by more than 80%. Affiliate and comparison campaigns using brand assets are under higher scrutiny.

- Ad fraud signal: Views of ads containing scams and other serious violations dropped 7% in early tests. Expect auction dynamics to shift in high-fraud verticals over the next two quarters.

- AI detection logic: Pattern-matching on domain inconsistency, brand mismatches, price credibility gaps, and behavioural account signals.

- Measurement change: Instagram violation prevalence numbers changed due to methodology, not actual increases. Wait two reporting periods before reading trend direction.

- Your action now: Audit domain consistency, anchor discount claims to credible reference points, and scale accounts gradually rather than in sudden steps.
